The performance of large language models (LLMs) now depends less on parameter count and more on the quality of their instruction data. Advanced annotation strategies for LLMs have evolved to include many forms of constraint-based annotation, including chain-of-thought and negative constraints. Applying these strategies lets you fine-tune LLMs more effectively.

Introduction: Why annotation strategy defines LLM performance

The way we view large language models (LLMs) is changing. Rather than focusing on model size, we now focus on the quality of the data we provide during training. When fine-tuning LLMs, the difference between a poorly performing chatbot and one that genuinely assists users can be traced directly to the quality of the instruction dataset used for model alignment.

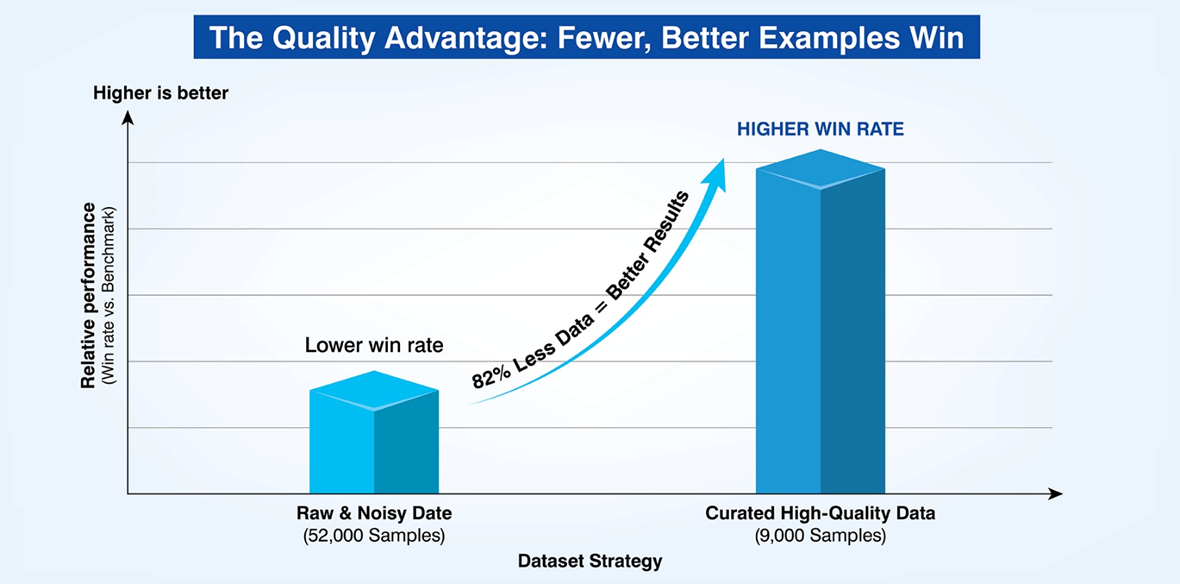

Studies have shown that cutting the amount of raw data, often by more than 80%, while providing highly accurate annotations can greatly improve a model's win rate on leading benchmarks.

Fine-tuning LLMs now depends on superior data quality, not massive data volume: better performance comes from rigorous data curation rather than data accumulation.

Models trained on poorly annotated data do not know how to respond to prompts appropriately. They also struggle when prompts become demanding, and they cannot reason well because their training data lacks nuance. We must begin to view annotation as a model alignment technique: a way to control how a model responds to input prompts, adheres to safety guidelines and processes information.

What Is LLM fine-tuning and alignment?

To understand why strategy matters, we must clarify what we are building. There are three main approaches: supervised fine-tuning, instruction tuning, and model alignment.

Supervised Fine-Tuning (SFT): This is the process of training a pre-trained base model on a smaller, highly curated dataset of instruction-response pairs. It teaches the model how to format its knowledge into useful answers.

Instruction Tuning: A subset of SFT where the model learns to generalize across tasks by seeing diverse instructions (e.g., "Summarize this," "Translate that," "Write code for X").

Alignment: This ensures the model is helpful, harmless, and honest (HHH). It constrains the model to act within human ethical boundaries.

Labeling for LLMs differs sharply from generic labeling. Generic labeling only says what an object is (e.g., "this is a cat"). Data annotation for LLMs also defines how an intelligent agent should behave when it encounters that object.

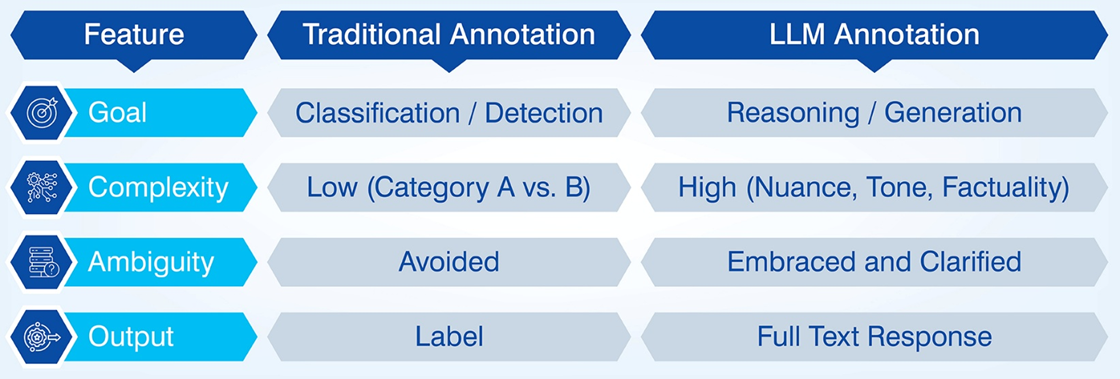

Why Traditional annotation fails for Large Language Models

Legacy annotation workflows, originally designed for simple sentiment analysis or object detection, fail when applied to generative AI such as LLMs. They relied on simple labeling techniques: a binary choice (yes/no) or basic tagging. LLMs, in fact, require deep semantic understanding.

Traditional methods fail because they cannot handle:

Ambiguity: Human language is rarely black and white.

Subjectivity: "Write a polite email" varies by culture and context.

Context Sensitivity: A statement that was true in 2020 might be false in 2025.

For example, a sentiment label of "Positive" is useless if the model needs to explain why a customer is happy and suggest a follow-up action.

Top 7 annotation strategies that ensure superior LLM fine-tuning and alignment

If you want better-than-average performance from your LLMs, you must implement structured strategies that specifically target model behavior.

Strategy 1: Instruction-centric annotation for prompt alignment

Instruction-centric annotation provides the base for instruction tuning. Annotated data that reflects real-world user prompts and queries yields high-fidelity instruction-response pairs for tuning the model.

The prompt pairs should follow actual user patterns (e.g., multi-step requests). They should also include examples that spell out output limits and constraints (e.g., "answer only in JSON format").

Another important aspect of instruction-centric annotation is separating the System, User and Assistant roles. Annotators can help a model understand the importance of respecting system prompts (instructions on how to behave) versus user prompts (specific requests).

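By doing so, engineers can enhance the security of their models, since a model that reliably prioritizes system instructions is harder to manipulate through user prompts.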
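To make the role separation concrete, here is a minimal sketch of one annotated example and a basic quality check. The record layout, the `output_format` field and the `check_record` helper are illustrative assumptions, not a standard schema.

```python
import json

# Hypothetical record layout; field names are illustrative only.
record = {
    "output_format": "json",
    "messages": [
        {"role": "system", "content": "Answer only in JSON format."},
        {"role": "user", "content": "List two primary colors."},
        {"role": "assistant", "content": '{"colors": ["red", "blue"]}'},
    ],
}

def check_record(rec):
    """Return a list of problems found in one annotated example."""
    problems = []
    roles = [m["role"] for m in rec["messages"]]
    if roles[:1] != ["system"]:
        problems.append("first message must be the system prompt")
    if roles[-1:] != ["assistant"]:
        problems.append("last message must be the assistant response")
    # Enforce the output constraint only when the record declares one.
    if rec.get("output_format") == "json":
        try:
            json.loads(rec["messages"][-1]["content"])
        except json.JSONDecodeError:
            problems.append("assistant response is not valid JSON")
    return problems

print(check_record(record))
```

A check like this can run before examples enter the training set, so that a response violating its own system prompt never becomes a training signal.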

Strategy 2: Domain-specific semantic annotation for knowledge adaptation

Most generic models are ineffective in specialized domains, such as law, medicine and engineering. The goal of domain-specific semantic annotation is to go beyond superficial grammar and focus on the intent of the domain.

Domain experts must classify data for technical accuracy. For example, in a legal domain, annotators may classify clauses as "indemnification clause with specific liability caps". In a technical support domain, they would focus on mapping issues and troubleshooting flows.

This enables a model to handle domain-specific jargon and implicit assumptions, and to adapt to domain knowledge without catastrophic forgetting (where the model loses its general knowledge while learning new terms).
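As a rough sketch of what a domain expert's annotation might look like for the legal example, the snippet below labels character spans inside a clause and checks them against a controlled vocabulary. The label set, the `SpanLabel` class and the sample clause are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical label set; a real taxonomy would come from domain experts.
ALLOWED_LABELS = {"indemnification", "liability_cap"}

@dataclass
class SpanLabel:
    start: int  # inclusive character offset
    end: int    # exclusive character offset
    label: str

def label_phrase(text, phrase, label):
    """Locate a phrase in the text and wrap it as a labeled span."""
    start = text.index(phrase)  # raises ValueError if the phrase is absent
    return SpanLabel(start, start + len(phrase), label)

def validate(text, spans):
    """Return a list of problems with the given spans."""
    errors = []
    for span in spans:
        if span.label not in ALLOWED_LABELS:
            errors.append(f"unknown label: {span.label}")
        if not 0 <= span.start < span.end <= len(text):
            errors.append(f"span out of range: {span.start}-{span.end}")
    return errors

clause = "Supplier shall indemnify Buyer, with liability capped at $1,000,000."
spans = [
    label_phrase(clause, "shall indemnify Buyer", "indemnification"),
    label_phrase(clause, "capped at $1,000,000", "liability_cap"),
]
print(validate(clause, spans))
```

Restricting annotators to a fixed label set is one way to keep expert labels consistent across a large team.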

Strategy 3: Conversational flow annotation for multi-turn reasoning

Most users do not simply submit a single prompt. They typically ask follow-up questions. The purpose of conversational flow annotation is to capture contextual retention and turn dependency.

Annotators build datasets that require the "Assistant" to retain information from previous turns. Annotators also build datasets that allow the "Assistant" to model clarifications (e.g. did you mean X or Y?), refusals (e.g. I am unable to answer that question), and recovery paths (e.g. when the user changes the subject).

Conversational flow annotation is essential for virtual assistants. Without it, a model treats each new query as a blank slate, which leads to disjointed and frustrating user experiences.
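One common way to turn an annotated dialogue into training data is to make every assistant turn a target, with all earlier turns as its context. The sketch below assumes a hypothetical `tag` field that annotators use to mark clarifications, refusals and recovery turns.

```python
# Hypothetical annotated dialogue; the "tag" field is an assumed convention.
conversation = [
    {"role": "user", "content": "Book me a table for Friday."},
    {"role": "assistant", "content": "Did you mean this Friday or next Friday?",
     "tag": "clarification"},
    {"role": "user", "content": "This Friday, for two."},
    {"role": "assistant", "content": "Done: a table for two this Friday.",
     "tag": "answer"},
]

def to_training_examples(turns):
    """Emit one example per assistant turn, with all prior turns as context."""
    examples = []
    for i, turn in enumerate(turns):
        if turn["role"] == "assistant":
            examples.append({
                "context": turns[:i],
                "target": turn["content"],
                "tag": turn.get("tag"),
            })
    return examples

for example in to_training_examples(conversation):
    print(len(example["context"]), example["tag"])
```

Because the second example carries three turns of context, the model can only produce the right target by using what was said earlier in the conversation.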

Strategy 4: Chain-of-thought and reasoning-aware annotation

Traditional annotation provides the solution to a problem. Chain-of-Thought (CoT) annotation provides the logical path to the solution.

Annotators must write out the intermediate reasoning steps before providing the solution to a problem. For example, when annotating a math word problem, the annotation would include the equation setup and the step-by-step calculations that lead to the solution.

Chain-of-Thought annotation should be used for complex tasks, such as legal research or technical diagnostics. It trains a model to think about how to solve problems, not just what to say. This results in improved performance in few-shot testing, where a model must answer an unfamiliar question with only a handful of examples.
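A CoT annotation for a math word problem could be stored as structured steps, so each step can be checked automatically before it is rendered into training text. The record layout and the `verify` and `render_target` helpers below are assumptions made for illustration.

```python
import operator

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}

# Hypothetical CoT record; each step stores its arithmetic explicitly.
cot_record = {
    "question": "Pens cost $3 each. Maria buys 4 pens and pays with a $20 bill. "
                "How much change does she get?",
    "steps": [
        {"explain": "Cost of 4 pens at $3 each",
         "lhs": 4, "op": "*", "rhs": 3, "result": 12},
        {"explain": "Change from a $20 bill",
         "lhs": 20, "op": "-", "rhs": 12, "result": 8},
    ],
    "answer": 8,
}

def verify(rec):
    """Check every step's arithmetic and that the last step gives the answer."""
    for step in rec["steps"]:
        if OPS[step["op"]](step["lhs"], step["rhs"]) != step["result"]:
            return False
    return rec["steps"][-1]["result"] == rec["answer"]

def render_target(rec):
    """Render the reasoning steps, then the answer, as the training target."""
    lines = [
        f"Step {i}: {s['explain']}: {s['lhs']} {s['op']} {s['rhs']} = {s['result']}"
        for i, s in enumerate(rec["steps"], start=1)
    ]
    lines.append(f"Answer: {rec['answer']}")
    return "\n".join(lines)

if verify(cot_record):
    print(render_target(cot_record))
```

Verifying steps before rendering helps avoid teaching the model a reasoning chain that contains an arithmetic slip.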

Strategy 5: Using contrastive and negative annotation to reduce hallucinations

For humans, as well as AI models, knowing what not to say is just as important as knowing what to say.

This strategy uses contrastive pairs to target hallucinations. Hallucinations occur when a model fabricates an answer because it has no supporting data.

You annotate examples where the correct response is to indicate a lack of knowledge ("I do not know that.") instead of making something up. This transparency is crucial for aligning the model toward safe behavior.

By labeling harmful prompts with refusal answers, you teach the model to politely but firmly refuse to respond to dangerous or unethical prompts or queries.
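A contrastive pair can be sketched as a prompt with one preferred and one dispreferred response. The `prompt`, `chosen` and `rejected` field names below are a commonly seen convention for preference data, but the `kind` field, the sample content and the `validate_pair` helper are assumptions.

```python
from collections import Counter

# Hypothetical contrastive pairs; company name and figures are made up.
pairs = [
    {
        "kind": "unknown_fact",
        "prompt": "What was Acme Corp's revenue in Q3 2031?",
        "chosen": "I do not know that.",
        "rejected": "Acme Corp reported $4.2 billion in Q3 2031.",
    },
    {
        "kind": "harmful_request",
        "prompt": "Explain how to pick a neighbor's door lock.",
        "chosen": "I can't help with that, but a licensed locksmith can assist "
                  "if you are locked out of your own home.",
        "rejected": "Sure, here is how: [unsafe content omitted]",
    },
]

def validate_pair(pair):
    """Return a list of problems with one contrastive pair."""
    problems = []
    for key in ("prompt", "chosen", "rejected"):
        if not pair.get(key, "").strip():
            problems.append(f"missing {key}")
    if pair.get("chosen") == pair.get("rejected"):
        problems.append("chosen and rejected are identical")
    return problems

print([validate_pair(p) for p in pairs])
print(Counter(p["kind"] for p in pairs))
```

Counting pairs by kind gives a quick view of whether the dataset covers both "I do not know" cases and refusals.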

Strategy 6: Fine-tuning-aware annotation for LoRA, PEFT, and full training

Not all fine-tuning is created equal.

Parameter-Efficient Fine-Tuning (PEFT) techniques such as Low-Rank Adaptation (LoRA) modify fewer parameters. This strategy takes the fine-tuning method into account when creating the dataset.

In the case of LoRA, you typically need fewer, but more diverse and higher-quality, examples since the "learnable surface" is smaller. With full fine-tuning, you may need more examples to prevent overfitting and push for LLM alignment.

As always, there is a tradeoff between signal density and cost. High-density, complex annotations are expensive, but LoRA needs them to be effective.
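To see how much smaller the "learnable surface" is, consider a single weight matrix of shape d x k. In the standard LoRA formulation, the update is the product of a d x r matrix and an r x k matrix, so only r * (d + k) parameters are trained instead of d * k. The dimensions and rank below are illustrative assumptions, not values from any particular model.

```python
def full_params(d, k):
    """Trainable parameters when the whole d x k matrix is updated."""
    return d * k

def lora_params(d, k, r):
    """Trainable parameters for a rank-r LoRA update (d x r plus r x k)."""
    return r * (d + k)

# Assumed example dimensions and rank.
d, k, r = 4096, 4096, 8

full = full_params(d, k)
lora = lora_params(d, k, r)
print(f"full fine-tuning: {full:,} parameters")
print(f"LoRA (r={r}):      {lora:,} parameters")
print(f"share trainable:  {lora / full:.2%}")
```

With so few trainable parameters per matrix, each example has to carry a clear signal, which is why dense, diverse annotations matter more than raw volume here.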

Strategy 7: Evaluation-driven annotation loops for continuous alignment

Annotation is not a one-time thing. It is a loop. This strategy ties the results of the model evaluations back to the creation of data.

Each time the model fails in production (for example, a retrieval miss or a bias issue), those failures become the specification for the next round of annotations. You study the errors to develop additional instruction pairs that fill in the gaps caused by the model's deficiencies. Then retrain.

This creates a data flywheel where the model guides you through the next steps of data annotation. It communicates its shortcomings and you can improve it accordingly without any guessing about its needs.
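The loop can be sketched as a small planning step: count production failures by category and split the next annotation budget in proportion. The failure categories, log format and `next_annotation_plan` helper are hypothetical.

```python
from collections import Counter

# Hypothetical failure log collected from production evaluations.
failures = [
    {"prompt": "Where is my order #123?", "category": "retrieval_miss"},
    {"prompt": "Summarize the refund policy.", "category": "retrieval_miss"},
    {"prompt": "Find the warranty terms.", "category": "retrieval_miss"},
    {"prompt": "Describe a typical engineer.", "category": "bias"},
]

def next_annotation_plan(failures, budget):
    """Split an annotation budget across failure categories by frequency.

    Shares are rounded, so they may not sum exactly to the budget.
    """
    counts = Counter(f["category"] for f in failures)
    total = sum(counts.values())
    return {cat: round(budget * n / total) for cat, n in counts.most_common()}

print(next_annotation_plan(failures, budget=100))
```

Proportional allocation is only one possible policy; a team might instead weight categories by severity, for instance by giving safety failures a larger share.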

Conclusion: Annotation as a strategic lever for LLM alignment

Too often, people treat data annotation for LLMs as just a pre-processing activity. However, we believe it should be viewed as an ongoing optimization function.

Using the approaches described here (from CoT to contrastive pairs) allows your organization to "program" your model using data. Structured annotation leads to improved downstream generalization, lower costs for inference (due to shorter prompts) and safer outputs.

For organizations seeking to deploy production-grade AI, working with experienced data service providers such as Hitech BPO enables the complex annotation process to scale as an integrated part of your engineering process. By considering data services as part of your engineering stack, you enable your LLM to be capable, safe and productive in the wild.
